1.4/50 Summilux ASPH, Leica M10P, RAW

Midjourney and ChatGPT, two powerful applications, have emerged in rapid succession, and so-called generative AI based on diffusion models or the Transformer architecture has become a hot topic everywhere. Midjourney, which drew a lot of attention for generating image after image, leans toward the creator side. When ChatGPT, which answers questions conversationally, was released at the beginning of December, its ability to answer questions became a topic of considerable discussion in the Skill Definition Committee of the Data Scientists Society of Japan. I was also quick to advise the students in my lab: "You guys should just use it without overthinking it. Unless you use it, you will not understand its greatness, its challenges, or anything else."

Then, two weeks ago at a seminar, a student who was about to graduate said to me,

“I can't live without ChatGPT. I make ChatGPT do all my assignments, my emails, ChatGPT can do SQL, ChatGPT can do diagrams. But when I ask ChatGPT to cite a paper, ChatGPT generates a fictitious paper and cites it.”

He literally uses ChatGPT as his "new servant," having it write code, translate, draft reports, and reply to emails from people who are a pain in the ass. He also has ChatGPT cite papers, and he can spot where it is making up things that don't actually exist. It's quite impressive.

In parallel, when ChatGPT was given the US Medical Licensing Examination (USMLE), reports emerged that it scored at or near the passing threshold without any special training, and that its explanations showed a high level of concordance and insight. It seems natural that this works well in medicine, where reliable information is abundant, but it is also likely to have a major impact on how knowledge professionals are trained and on their future.

Meanwhile, a significant number of students at Stanford University are already using ChatGPT. According to an article in The Stanford Daily (founded in 1892) published about five days ago, an anonymous survey conducted from January 9 to 15 (N=4,497), just over a month after ChatGPT appeared, found that around 17% of student respondents had used ChatGPT for fall-quarter assignments and exams.

This is not surprising for Stanford, which is located in the middle of Silicon Valley.

In the article, university spokesperson Dee Mostofi says that "Students are expected to complete coursework without unpermitted aid," adding, "In most courses, unpermitted aid includes AI tools like ChatGPT."

Still, in this phase of discontinuity, it is more important for those who will create the future to use these tools and get a feel for their implications before anyone else, rather than simply following the rules and remaining ignorant of them.

The same is surely true at UC Berkeley, Stanford's rival across the Bay, and at Carnegie Mellon (CMU) and MIT, which together with Stanford and Berkeley make up the four great meccas of computer science.

And now, in a bit of a milestone, Microsoft has announced a major investment in OpenAI, a major player in this field. The implications of this deal are quite interesting from an industry perspective in their own right, but I will not discuss them here.

-

Back to the topic at hand: the emergence of generative AI tools indicates that education, work, and everything else need to change. As I wrote in Harvard Business Review Japan (HBR Japan) more than seven years ago, humans are creatures who will use any and every technology that is created. (That was a time when AI had suddenly become such a hot topic, and views on it were so confused, that I was asked to lay out how we should think about AI, including its implications for society and business.)

At the end of the 20th century, when "search" was invented on the Stanford campus in Palo Alto, it was said that the value of simply providing answers would disappear, and what we are seeing now is a sign that we are truly entering that new era. From this perspective, the current education system, in which students are given many questions that have a fixed answer and compete to produce the correct answer as quickly as possible, is becoming increasingly pointless. This is because machines are better at this, and we are entering an age in which we will increasingly leave such tasks to them. (On the other hand, the ability to dig into questions that have no obvious starting point is more important than ever.)

According to the Stanford Daily article above, one course now requires that "If you choose to use an AI agent for generating portions or aspects of an assignment, you must disclose this use and cite it in the same manner as you would cite any external source." Other courses have reverted to paper-and-pencil exams in response to ChatGPT.

It is true that in many cases you need knowledge crammed into your head, such as anatomy in medicine, to make immediate decisions in the field, and the confusion in higher education over this will continue for a while, but I believe it will settle down within a year or two.

Be that as it may, this change means that the ability to formulate meaningful questions, evaluate the answers produced, and give the right questions and instructions has become critically important. In a real sense, we have entered the age of the "liberal arts," and this also means that we have entered the age of refining "perception," the core concept and conclusion of my earlier HBR Japan discussion on the essence of intelligence.

The ability to appreciate diverse values and forms of beauty in a rich and vivid way, a sense of beauty grounded in that ability, a heart that wants things to be a certain way, and a vivid sense that something is not yet good enough: these are the real keys to success in a future shaped by these generative, interactive AIs. The starting point is to feel things deeply and vividly with the body, such as by stroking and licking them.

As I discussed with Dr. Yoichi Ochiai on Weekly Ochiai at the beginning of the year, Japan's elementary and secondary education system, which largely provides almost the opposite kind of education, risks becoming a machine that mass-produces "high-IQ people who are simply put to work" unless drastic changes are made. Although students will work around much of this on their own, if they are not given considerable freedom in elementary, middle, and high school, their ability to generate questions and to feel and evaluate things in their own way will be seriously damaged.

As you will soon see if you try it, ChatGPT is very different from so-called "search." While you can use ChatGPT as a search tool by typing in the words you want to know about, this approach does not unleash the power of this Large Language Model (LLM)-based tool.

This is because search is better and more accurate at such things, while there are other things at which LLM-based tools are overwhelmingly better. (Some of you may remember that Galactica, the AI tool Meta introduced as if it could answer anything, was taken offline after just three days.)

Search, arguably the most widely used AI-based tool today, is extremely good at pinning down what the answer is. It can search the world's information behind the scenes and give you an answer in an instant, and it is highly reliable. In reality, it works by looking up information that has been digitized, assessed for reliability, and organized in advance, but when this is done well, it can reach the answer you want shockingly fast.

ChatGPT is a chatbot that gives you a general direction on things you want to know, or things you want to do but don't know how to approach; in other words, things you previously couldn't get answers to unless you figured them out yourself or asked people who know what they are talking about. Moreover, the answers are generated in real time. This is a unique and moving experience, much like asking a human being. I also like that a different answer is generated each time. If you give the right instructions, you can even have ChatGPT write code.

This applies not only to text but also to images, music, and generative systems in general: these new machines create things incomparably faster than humans can (see Ochiai's demonstration on Weekly Ochiai). What this means is that many people are approaching a position similar to that of Michelangelo, who had a large number of disciples and devoted his energy to directing and finishing their work.

The problem is that what it says is not necessarily correct; as my student pointed out, its answers are often made up or pieced together from similar things (which, if you think about it, is exactly what makes a generative system inherently creative). The same goes for papers and facts. When I acquired the building blocks (tsumi-ishi) in the image above, I asked, "What are the similarities and differences between 'Sai no Kawara' (the Children's Limbo) and The Myth of Sisyphus?" and got the following answer:

In Greek mythology, the Sai no Kawara is the place of judgment for the dead. Those who have done evil are cast into the underworld, while those who have done good are accepted by Elias.

On the other hand, "Sisyphus" is the place of judgment for the dead in Egyptian mythology. The dead are judged by Osiris, and those with light hearts are brought back to life, while those with heavy hearts are cast into the underworld.

Both depict the place of judgment that the dead undergo, so there is a similarity, but there is a difference because the person or method of judgment is different.

This is quite an interesting answer, but it clearly confuses the Buddhist worldview of the Sai no Kawara with Greek mythology (Sisyphus) and Egyptian mythology.

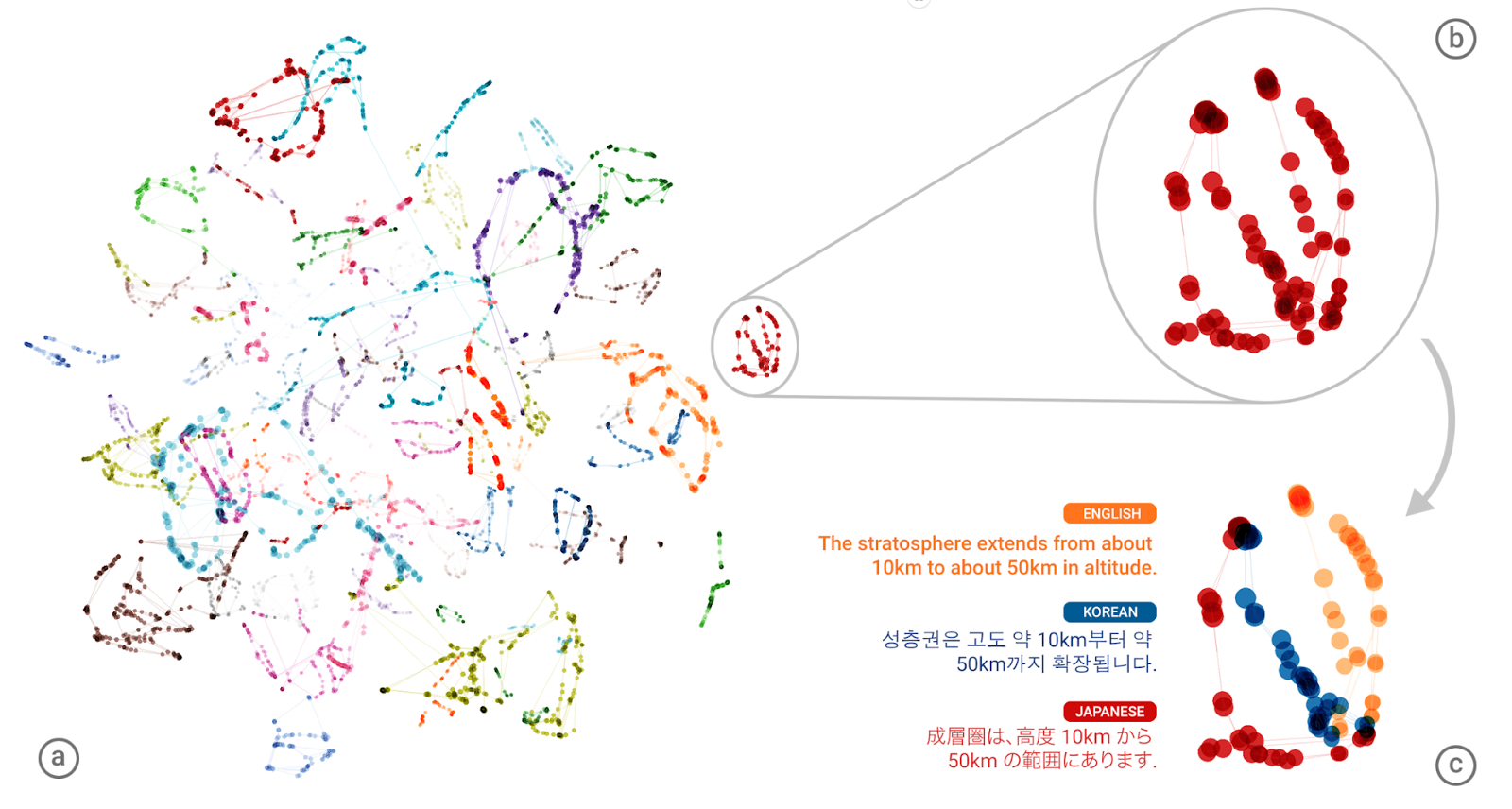

However, this is to some extent unavoidable, considering that in an LLM, words, phrases, and their meanings are represented as vectors in a high-dimensional space. The following paper on Google's multilingual neural machine translation system, the technology behind Google Translate, gives some idea of what such high-dimensional representations look like.

https://arxiv.org/pdf/1611.04558v1.pdf

Still, it is somewhat impressive that "Sai no Kawara" ends up quite close to "Sisyphus" in this vector space; after all, both are stories of endless, futile labor with stones. Perhaps this means we are only a few steps away from machines that, like humans, discover similarities, extract meaning from them, and extend them into new ideas and analogies.
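To make "close in vector space" a little more concrete, here is a minimal sketch of cosine similarity, a common way of measuring how near two vectors point in the same direction. The four-dimensional vectors and their imagined axes are invented purely for illustration; they do not come from ChatGPT or any real model, whose learned embeddings have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Cosine of the angle between u and v: 1.0 = same direction, 0.0 = orthogonal."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same dimension")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_u * norm_v)


# HYPOTHETICAL toy "embeddings", hand-made for illustration only.
# Imagined axes: [afterlife, futile repetition, stones, judgment]
toy_vectors = {
    "Sai no Kawara": [0.9, 0.8, 0.7, 0.2],
    "Sisyphus": [0.6, 0.9, 0.8, 0.3],
    "Osiris": [0.9, 0.1, 0.0, 0.9],
}


def most_similar(query: str, vectors: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Rank every other word by cosine similarity to the query word, highest first."""
    q = vectors[query]
    scores = [(w, cosine_similarity(q, v)) for w, v in vectors.items() if w != query]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    for word, score in most_similar("Sai no Kawara", toy_vectors):
        print(f"{word:10s} {score:.3f}")
```

With these made-up numbers, "Sisyphus" ranks well above "Osiris" because the two stone-stacking stories share the "futile repetition" and "stones" directions, while a model that blurs such neighbors together can easily produce an answer like the one above.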

In fact, according to the Stanford Daily article above, the most common use (about 60%) among Stanford students who used ChatGPT was as a brainstorming partner. Even Stanford students, who usually have experts and knowledgeable people close at hand, are unlikely to ask those people for help with this kind of college homework. Yet most of our everyday ideas start from something almost trivial. And when we ask ChatGPT, we get something back almost instantly. A messy answer is not a bad thing. People are even messier and more random, yet communication is still possible, and something interesting can come out of such dialogue.

I am not the only one who feels that this is leading to something great.

One of my greatest joys is imagining something more by connecting things that are not normally connected, and now I have another new tool for doing so.

Now, with new tools in hand, let's go back to the real world.

P.S. A sequel to this entry is available at kaz-ataka.hatenablog.com.

Note: This blog entry was translated from the original Japanese entry by DeepL (also an LLM-based AI tool), with some minor modifications.